Data leakage moved to the browser. Your DLP didn't follow.

Data leakage in the AI era doesn't happen through email attachments. It happens through a paste into ChatGPT, an upload to an AI tool, a prompt that contains your product roadmap. Neon catches it all.

Traditional DLP was built for a world where data left through known channels — email, USB, file shares. But when your workforce's primary work surface is the browser, and AI tools are the new productivity layer, the old model is blind.

The prompt is the new exfiltration vector

An employee pastes source code into an AI prompt for review. Another uploads a financial model to an AI tool for analysis. Legacy DLP never catches it because the data never leaves through a monitored channel.

Intent matters more than pattern

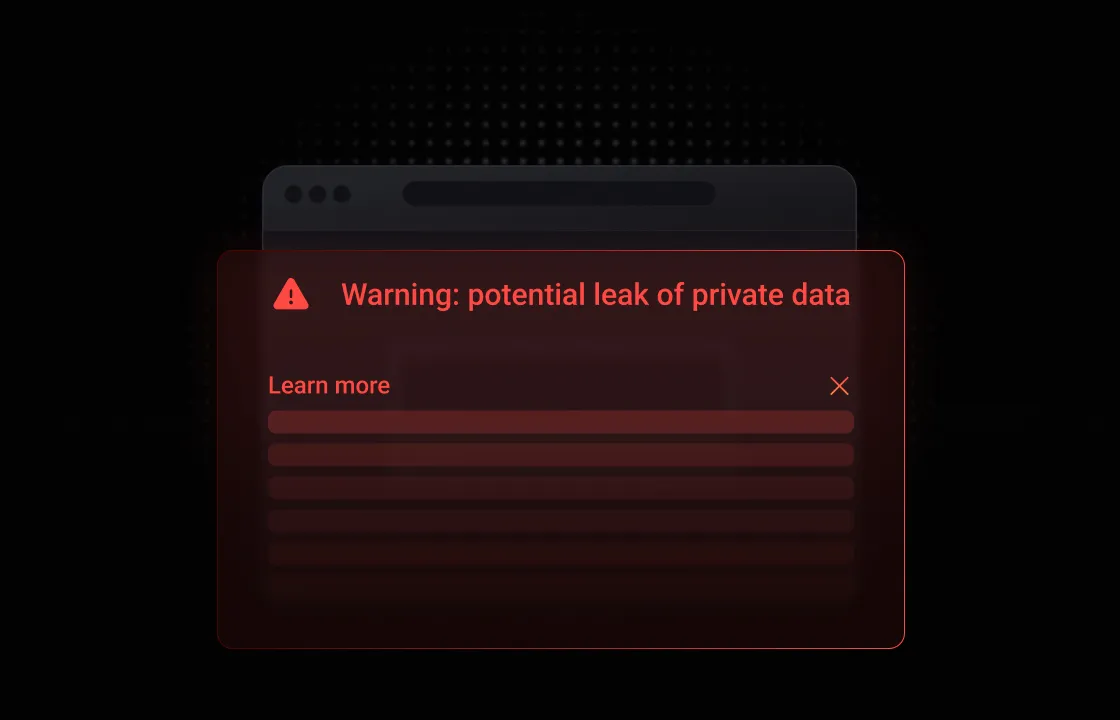

Regex-based DLP catches credit card numbers. It doesn't catch someone describing a confidential acquisition strategy in natural language to a chatbot. The risk isn't in the format — it's in the meaning.
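To make the gap concrete, here is a minimal sketch of a pattern-only check. The pattern, the function name `regex_flags`, and both sample prompts are illustrative assumptions, not Neon's detection logic or any vendor's actual rule set.

```python
import re

# Illustrative pattern only: 13 to 16 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,15}\d\b")


def regex_flags(text: str) -> bool:
    """Return True if the text contains something shaped like a card number."""
    return CARD_PATTERN.search(text) is not None


# Hypothetical prompts a user might type into a chatbot.
card_prompt = "Please validate this card: 4111 1111 1111 1111"
strategy_prompt = (
    "Summarize our plan to acquire a competitor next quarter "
    "before the board announces it."
)

print(regex_flags(card_prompt))      # True: the format matches
print(regex_flags(strategy_prompt))  # False: sensitive meaning, no matching format
```

The second prompt is arguably the riskier one, yet a format-based rule has nothing to match against.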

Insider risk isn't always malicious

Most data leakage comes from well-intentioned employees trying to be productive. They're not exfiltrating — they're just using the fastest tool available without realizing the risk.

Data loss prevention that understands intent, not just patterns.

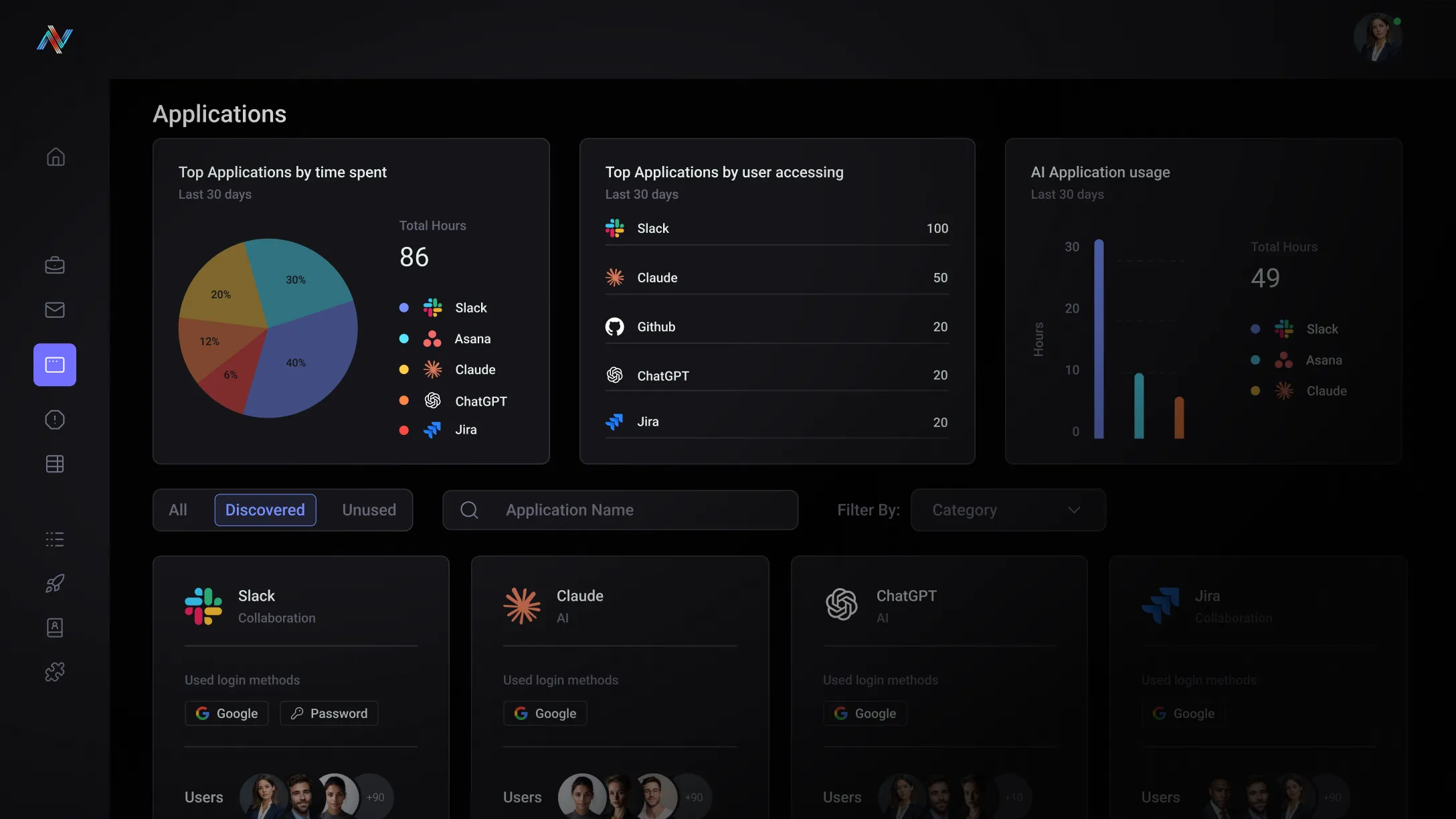

Neon analyzes interactions in the browser session (prompts, pastes, uploads, and form submissions), understanding the context of each data movement and correlating it with expected behavior rather than just matching keywords.

In-browser context

Neon not only understands the movement of data in the browser but also correlates it with identities and with SaaS and AI applications. Using this in-browser context, Neon monitors, detects, and enforces policies whenever a user copies, pastes, downloads, or uploads across the web.

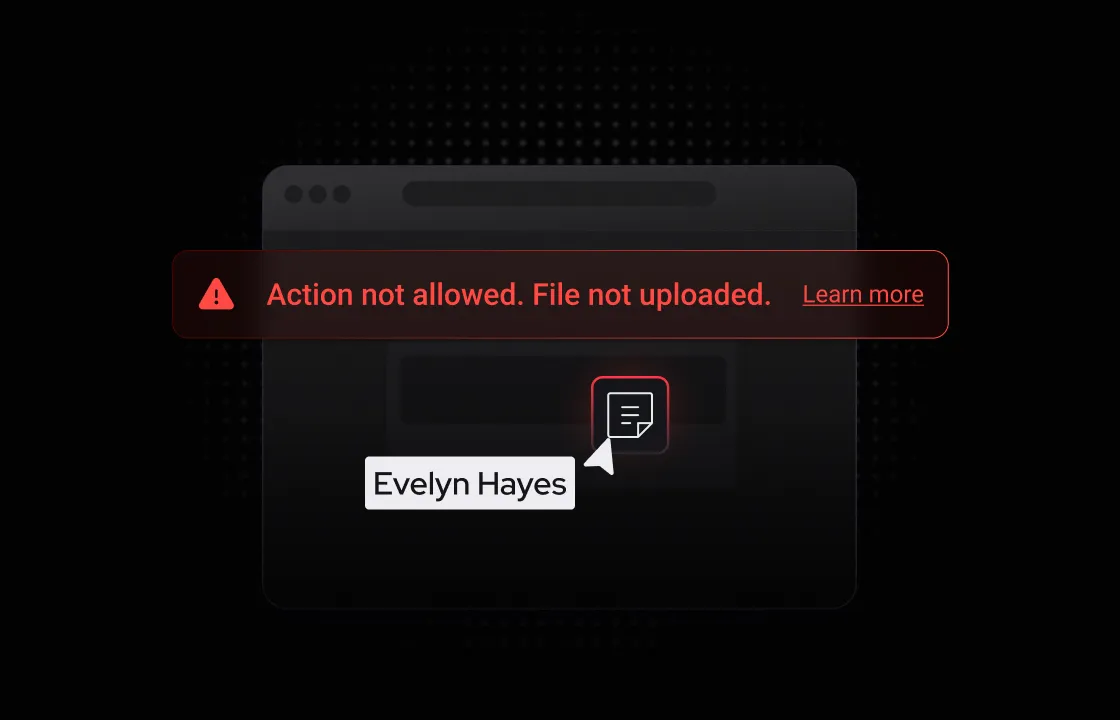

Point-of-action enforcement

Stop data leakage the moment before it happens — when the user clicks submit, paste, or upload. Not after the data has already left. Not in a log review the next morning.

Risk-aware user coaching

When someone's about to leak sensitive data, Neon doesn't just block — it explains why, offers alternatives, and builds organizational awareness one interaction at a time.

Built for your team

The Detection Gap

You know AI data leakage is happening, but your current stack can't see it. Prompts aren't files. Pastes aren't emails. Your DLP was never designed for what's happening inside a browser tab.

The Evidence Problem

During audits, you can't demonstrate what data was shared with AI tools or by whom. That gap is getting harder to defend as regulators start asking specific questions about AI governance.

Unlock the potential with Neon Cyber

Monitor every prompt, paste, and upload into any AI tool — the interactions that never pass through email, USB, or any channel your existing DLP watches.

Neon understands the movement of data in the browser, correlating this with identities and SaaS and AI applications.

Enforcement happens at the point of action — the click, the paste, the upload. Not in a post-incident log review 24 hours later.

Every detected interaction is logged with user, app, data classification, and action taken — ready for compliance reviews without any manual compilation.
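As a rough illustration of what such a record could look like, the sketch below models one logged interaction with the four fields named above. The class name `DetectionEvent`, the field names, the sample values, and the JSON shape are all assumptions for illustration, not Neon's actual log schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DetectionEvent:
    """One logged interaction. Field names are hypothetical."""
    user: str
    app: str
    data_classification: str
    action_taken: str


def to_audit_line(event: DetectionEvent) -> str:
    """Serialize an event as one JSON line, with stable key order for easy diffing."""
    return json.dumps(asdict(event), sort_keys=True)


# Hypothetical example values.
event = DetectionEvent(
    user="jane@example.com",
    app="chatgpt.com",
    data_classification="source_code",
    action_taken="block",
)
print(to_audit_line(event))
```

A flat, one-event-per-line format like this is one way to make records easy to filter by user or application during a review.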


Everything you need to know about Neon Cyber's capabilities and deployment

Traditional DLP was built for a world where sensitive data moved across managed channels — email attachments, file transfers, network traffic. It works by scanning for known data types and patterns before they cross a defined perimeter. That model was effective when the perimeter was clear. It isn't anymore.

The browser has become the primary environment where work happens — and where data leaks. When an employee pastes customer records into a ChatGPT prompt, uploads a contract to an unsanctioned SaaS tool, or enters credentials into an AI-generated phishing page, that interaction occurs inside an encrypted browser session. Traditional DLP — whether network-based, endpoint-based, or cloud-proxied — has no visibility into it. The data is gone before any alert fires.

Neon operates at the browser layer, where those interactions actually happen. It closes the enforcement gap that appears the moment a user opens a browser tab and starts typing. Your DLP handles what it was built for, but it was not designed to manage data inside the browser. Neon solves this exact problem.

Yes — and the way Neon does it is meaningfully different from how traditional data protection tools approach the problem.

Most DLP tools try to classify data before it moves — tagging files, scanning attachments, or inspecting traffic at the network layer. That model breaks down entirely when a user opens a browser tab and starts typing. There's no file to scan, no attachment to intercept, and no network signature to match against. The data leaves the organization as keystrokes inside an encrypted session, and conventional tools never see it.

Neon operates at the point of input — inside the browser session, at the moment a user interacts with a form or an AI tool's prompt field. Because Neon is constantly monitoring user behavior on the web, including which identities they're using to interact with apps, Neon can prevent sensitive data from ever being transmitted.

When a violation is detected, Neon can respond in several ways depending on how your policies are configured:

- Warn — deliver a real-time, in-browser alert that gives the user context about why the action is risky, allowing them to make an informed decision

- Block — prevent the submission from completing before sensitive data reaches the AI tool

- Capture — log the full event with forensic detail for your security and compliance team, even in cases where blocking isn't the right response
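The three responses above can be pictured as a small policy table. This is a minimal sketch under stated assumptions: the `Policy` fields, the first-match-wins rule, the `"*"` wildcard, and the example hostnames are hypothetical and do not describe Neon's real policy engine or configuration format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Action(Enum):
    WARN = "warn"        # show an in-browser alert and let the user decide
    BLOCK = "block"      # stop the submission before data is sent
    CAPTURE = "capture"  # log the event without interrupting the user


@dataclass(frozen=True)
class Policy:
    app: str          # hostname the rule applies to, or "*" for any app (assumed convention)
    interaction: str  # e.g. "paste", "upload", "prompt"
    action: Action


def decide(policies: List[Policy], app: str, interaction: str) -> Optional[Action]:
    """Return the action of the first matching policy, or None if nothing matches."""
    for policy in policies:
        if policy.app in (app, "*") and policy.interaction == interaction:
            return policy.action
    return None


# Hypothetical rule set.
POLICIES = [
    Policy(app="lovable.dev", interaction="upload", action=Action.BLOCK),
    Policy(app="*", interaction="paste", action=Action.WARN),
    Policy(app="*", interaction="prompt", action=Action.CAPTURE),
]

print(decide(POLICIES, "lovable.dev", "upload"))  # Action.BLOCK
print(decide(POLICIES, "chatgpt.com", "paste"))   # Action.WARN
print(decide(POLICIES, "chatgpt.com", "upload"))  # None
```

Ordering specific rules before wildcard rules is a design choice in this sketch; a real system might instead rank rules by severity or specificity.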

Coverage extends across sanctioned and unsanctioned AI tools alike, including tools your IdP has never seen and your DLP has no policy for.

Neon works across all browser-based AI tools (e.g., ChatGPT, Gemini, Claude, Lovable, n8n, Make, and Gumloop), including AI browsers like Comet or Atlas.

Neon can discover which AI apps your users are accessing and with what credentials. You can then monitor and control the actions users are able to complete in each app.

For example, if your marketing team starts using Lovable to generate an ROI calculator for prospects, you can restrict file uploads to that tool, reducing the chance that someone uploads confidential contract information for it to parse.

Protect your data where it actually moves — the browser.