Blocking doesn't change behavior. Guidance does.

Every blocked action is a missed chance to teach. Neon replaces static blocklists with real-time, context-aware coaching that makes your workforce smarter about risk — not frustrated by it.

The security industry's default response to AI risk is to block it. But blocking creates workarounds, frustrates your best people, and teaches nothing. The workforce doesn't need a cage. It needs a coach.

Blocking breeds shadow AI

Lock down one AI tool and your workforce finds another you don't know about. The risk doesn't disappear — it moves somewhere you can't monitor. And the resentment toward security grows.

Static rules can't handle nuance

A blanket block on pasting into AI tools stops the intern sharing sensitive data and the engineer pasting in public documentation alike. No context. No proportionality. No intelligence.

Annual training doesn't stick

A once-a-year security awareness module doesn't change daily behavior. By the time someone encounters a real risk scenario, the training is a distant memory. Context-free education fails.

Real-time guardrails that educate, not just enforce.

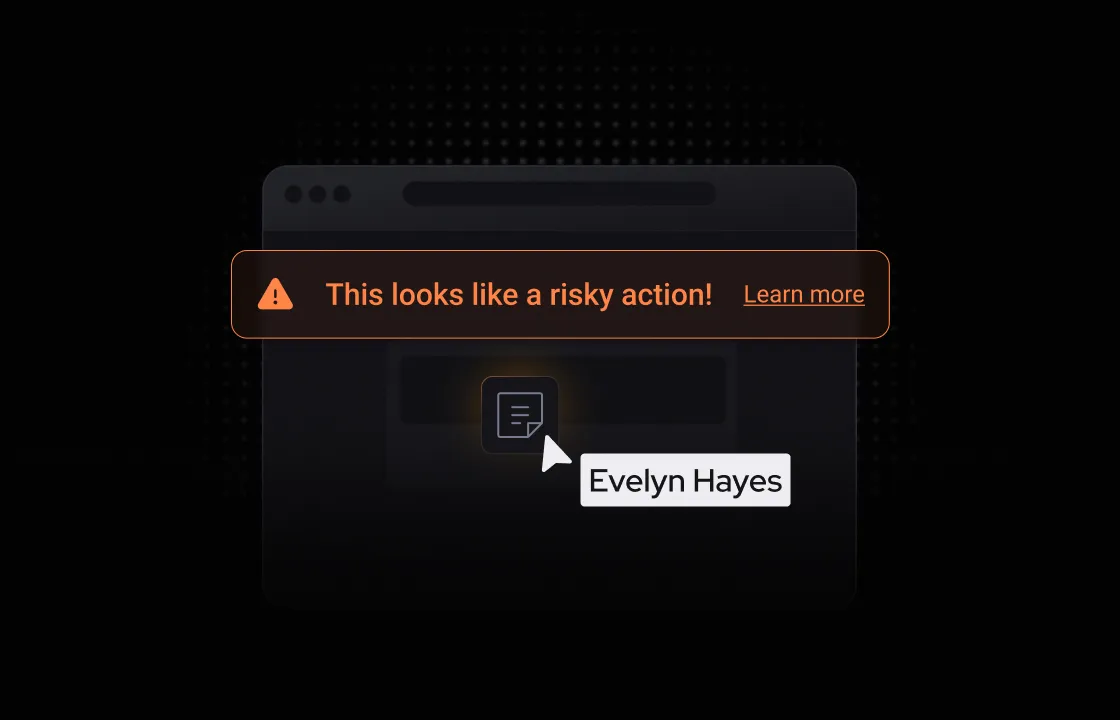

Neon intercepts risky behavior at the exact moment it happens and delivers context-aware guidance — explaining the risk, suggesting alternatives, and letting the user make an informed choice. Security becomes a coach, not a wall.

Contextual nudges

When a user is about to paste sensitive data into an AI tool, Neon explains what's at risk, why it matters, and what they should do instead — right there in the browser, right when it matters.

Behavior-based policies

Replace blanket blocks with intelligent rules that trigger based on what's happening — the sensitivity of the data, the risk profile of the destination, and the user's history. Block only when you must.

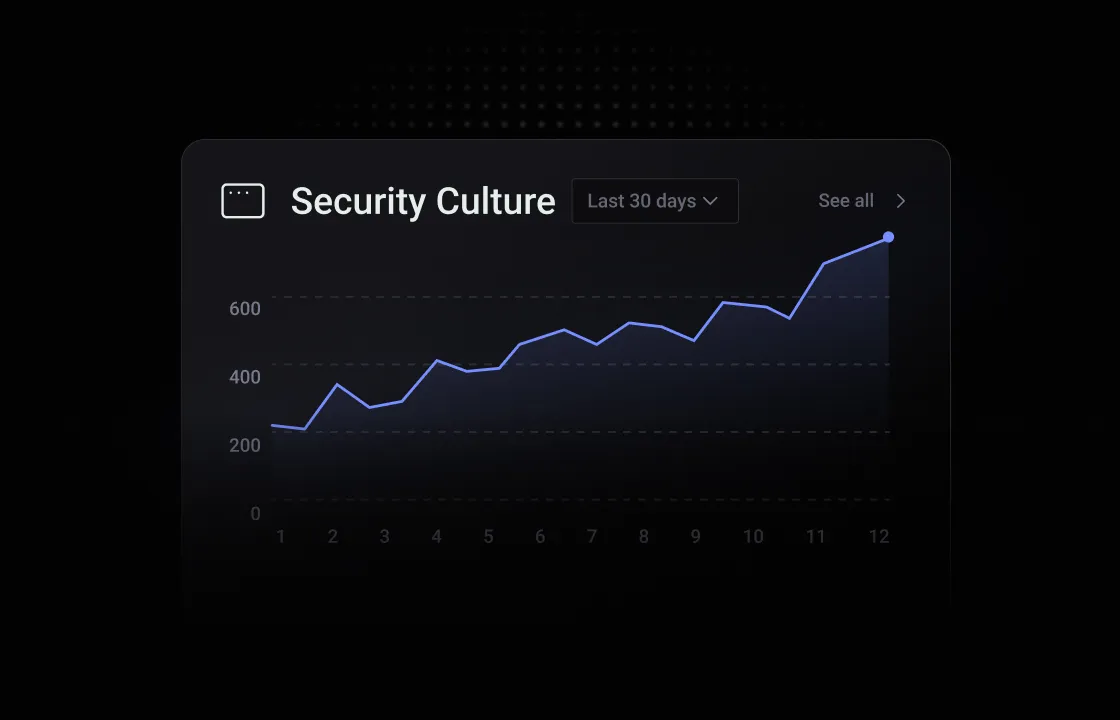

Measurable culture change

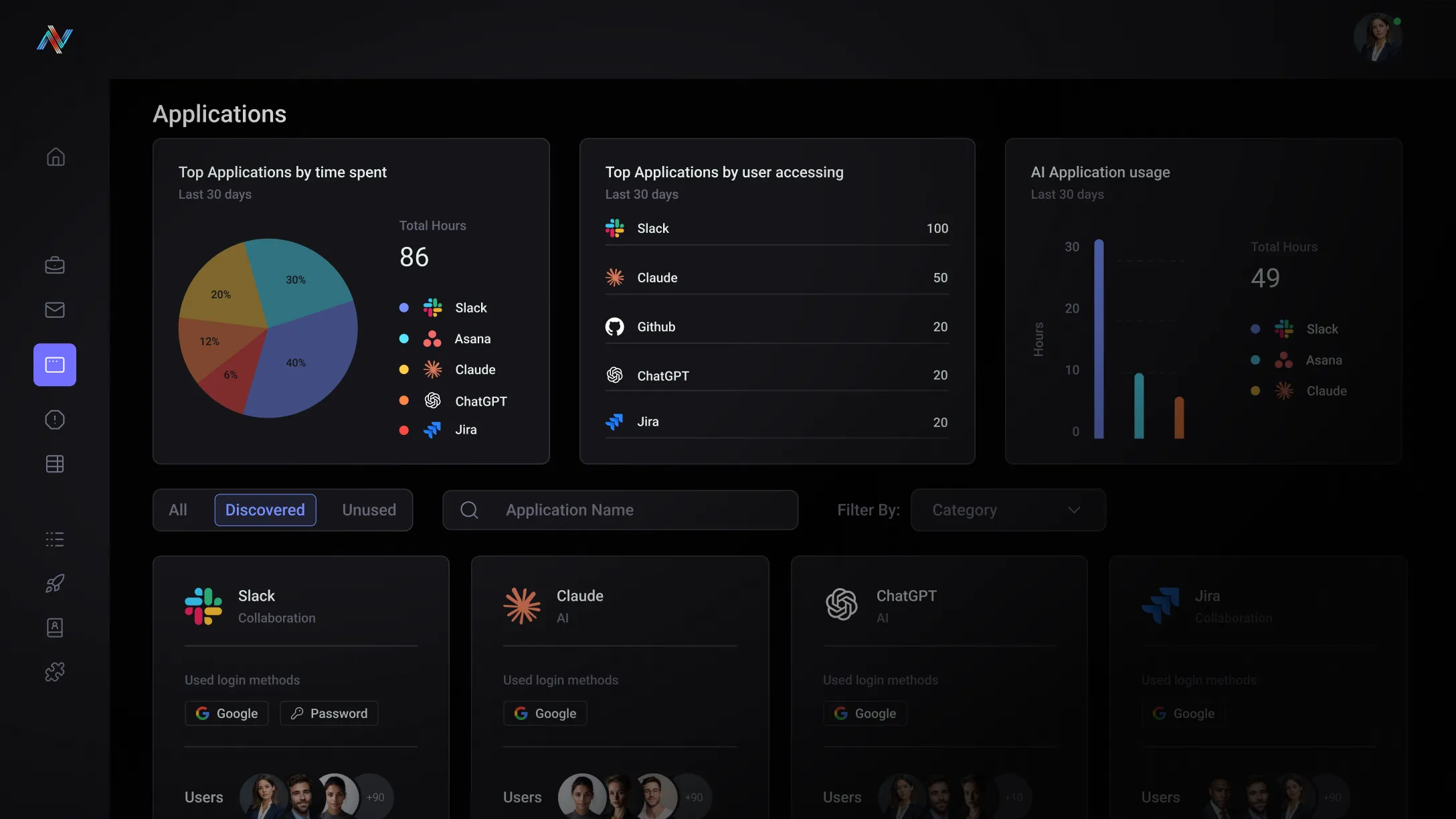

Track how user behavior evolves over time. Identify which teams are learning, where repeat violations concentrate, and how security awareness is improving across the organization.

Built for your team

The Blunt Instrument Problem

Every time you block an AI tool, you get pushback from the business and shadow usage climbs. You need controls that are proportional to the actual risk — not policies that treat every user as a threat.

The Enforcement Gap

You're responsible for deploying AI governance, but your current tools have no session-level context. A block is a block. There's no way to coach, explain, or differentiate. You can only allow or deny.

Unlock the potential with Neon Cyber

Coaching at the point of risk reduces unsafe behavior without blocking the tools your teams need to stay productive.

Policies trigger based on data sensitivity, app risk, and user context — not blanket rules that create friction for everyone equally.

In-the-moment guidance succeeds where annual training falls short. Users learn in context, at the exact moment the lesson is relevant.

Track how risk behavior changes across teams and individuals. Demonstrate a culture of security awareness with real data, not self-reported surveys.

Everything you need to know about Neon Cyber's capabilities and deployment

Blocking AI tools doesn't eliminate the risk; it relocates it. When employees can't access the AI tools they need through official channels, they find alternatives: personal devices, personal accounts, free-tier applications with no enterprise data controls, and tools your security team will never see. The risk doesn't go away. It just moves outside your visibility entirely.

Coaching works because the vast majority of AI-related data incidents aren't malicious; they're accidental. An employee who doesn't realize that a client name in a ChatGPT prompt could violate a data handling policy isn't a threat actor. They're a person who needed a five-second reminder at the moment it mattered.

Neon's real-time guidance model is built on this principle. When a user takes an action that triggers a policy — uploading a file to an AI tool, submitting sensitive data into a prompt, bypassing an authentication requirement — Neon intervenes with a context-aware warning that explains why the action is risky and what the policy requires.

Blocking has its place. For verified threats, high-risk applications, and clear policy violations where user discretion isn't appropriate, Neon can and does block. The point is that blocking should be a precise tool applied where it's warranted — not a default posture that pushes risk into the shadows.

Neon's policies can be tailored to each team, and this granularity is one of the most important capabilities for organizations where AI use looks fundamentally different across departments. A legal team handling privileged documents has different risk exposure than a marketing team experimenting with AI copywriting tools.

Neon's policies can be configured at the level of individual users, user groups, departments, roles, specific applications, data types, and authentication patterns. This means your security team can define distinct guardrails for each segment of the organization — allowing certain AI tools for one team while blocking them for another, warning one group about a behavior while automatically escalating the same behavior for a higher-risk population.
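To make segment-specific guardrails concrete, here is a minimal Python sketch of how such rules might be evaluated. It is not Neon's policy engine or configuration format: the `Policy`, `Event`, and `Action` types, the group, app, and data-type names, and the first-match ordering (most restrictive rules listed first) are all hypothetical assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    """Possible responses to a user action (hypothetical names)."""
    ALLOW = "allow"
    WARN = "warn"          # show contextual guidance; the user decides
    ESCALATE = "escalate"  # warn and notify the security team
    BLOCK = "block"


@dataclass(frozen=True)
class Event:
    """A user action observed in the browser (illustrative fields only)."""
    user: str
    group: str
    app: str
    data_type: str


@dataclass(frozen=True)
class Policy:
    """A rule scoped by group, app, and data type; None matches any value."""
    action: Action
    group: Optional[str] = None
    app: Optional[str] = None
    data_type: Optional[str] = None

    def matches(self, event: Event) -> bool:
        pairs = (
            (self.group, event.group),
            (self.app, event.app),
            (self.data_type, event.data_type),
        )
        return all(want is None or want == got for want, got in pairs)


def evaluate(policies: list[Policy], event: Event, default: Action = Action.ALLOW) -> Action:
    """Return the action of the first matching policy, or the default."""
    for policy in policies:
        if policy.matches(event):
            return policy.action
    return default


# Hypothetical rules, most restrictive first.
POLICIES = [
    # A tool that is blocked for legal but left available to marketing.
    Policy(Action.BLOCK, group="legal", app="ai-copywriter"),
    # The same behavior gets a different response per group.
    Policy(Action.ESCALATE, group="legal", data_type="client_data"),
    Policy(Action.WARN, group="marketing", data_type="client_data"),
]

print(evaluate(POLICIES, Event("dana", "legal", "chatgpt", "client_data")))
# Action.ESCALATE
print(evaluate(POLICIES, Event("sam", "marketing", "chatgpt", "client_data")))
# Action.WARN
```

Under these assumed rules, pasting client data escalates for the legal group but only warns for marketing, and the hypothetical `ai-copywriter` tool is blocked for legal while staying available to marketing. First-match ordering keeps evaluation easy to reason about; a real engine might instead combine all matching rules by severity.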

Policies can also be applied progressively. Organizations commonly begin in monitor mode to understand baseline behavior across teams before configuring enforcement — using the visibility Neon provides to build policies grounded in how employees actually work, rather than how policy documents assume they do.

Neon captures a structured event record every time a policy is triggered — including the user, application, action, data type, and outcome. Over time, that event data creates a measurable picture of how behavior across your organization is evolving in response to real-time guidance.

At the user level, security teams can track whether individuals who received a warning subsequently avoided the same behavior — and whether repeat triggers indicate a user who needs additional intervention or a policy that needs refinement.

At the organizational level, trend data on policy trigger frequency, broken down by team and application, gives security leaders a quantifiable view of where AI risk is concentrated and whether guidance is having the intended effect.

This matters for security leaders who need to demonstrate program effectiveness to the board or to auditors — moving from "we have a policy" to "here is evidence that our policy is changing behavior and reducing incident frequency over time."
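The user-level and organization-level measurements described above can be illustrated with a small Python sketch over a list of event records. The record mirrors the fields the text names (user, application, action, data type, outcome); the `team` and `day` fields, the outcome strings, the function names, and the sample data are hypothetical assumptions, not Neon's data model or reporting API.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PolicyEvent:
    """One policy trigger (hypothetical schema).

    user, app, action, data_type, and outcome mirror the fields described in
    the text; team and day are assumed extras used for aggregation.
    """
    user: str
    team: str
    app: str
    action: str
    data_type: str
    outcome: str  # assumed values such as "warned", "proceeded", "blocked"
    day: date


def repeats_after_warning(events: list[PolicyEvent]) -> dict[tuple[str, str], int]:
    """Map each warned (user, action) pair to how many times it recurred afterwards.

    0 means the user avoided the behavior after the first warning. Triggers that
    occur before any warning for that pair are ignored.
    """
    repeats: dict[tuple[str, str], int] = {}
    for event in sorted(events, key=lambda e: e.day):
        key = (event.user, event.action)
        if key in repeats:
            repeats[key] += 1  # same behavior again after being warned
        elif event.outcome == "warned":
            repeats[key] = 0  # first warning starts the count
    return repeats


def triggers_by_team_and_app(events: list[PolicyEvent], start: date, end: date) -> Counter:
    """Count triggers per (team, app) between start and end, inclusive."""
    return Counter((e.team, e.app) for e in events if start <= e.day <= end)


# Illustrative sample records, not real data.
SAMPLE = [
    PolicyEvent("ana", "legal", "chatgpt", "paste", "client_data", "warned", date(2024, 1, 2)),
    PolicyEvent("ana", "legal", "chatgpt", "paste", "client_data", "proceeded", date(2024, 1, 9)),
    PolicyEvent("ben", "marketing", "ai-copywriter", "upload", "customer_list", "warned", date(2024, 1, 3)),
    PolicyEvent("ana", "legal", "chatgpt", "paste", "client_data", "warned", date(2024, 2, 1)),
]

print(repeats_after_warning(SAMPLE))
# {('ana', 'paste'): 2, ('ben', 'upload'): 0}
```

In this sketch, a count of 0 means the user was warned and did not repeat that behavior, while a growing count flags either a user who needs more help or a policy worth revisiting. Comparing `triggers_by_team_and_app` across successive date windows gives the organization-level trend.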

Neon doesn't replace security awareness training, and it shouldn't. Security awareness training and real-time behavioral guidance solve different problems, and the organizations getting the most out of Neon have both.

Training programs build foundational knowledge: they teach employees what phishing looks like, why data handling policies exist, and what their responsibilities are under compliance frameworks. That foundation matters. But training has a well-documented retention problem.

Real-time guidance is the reinforcement layer that training can't provide on its own. When Neon delivers a context-aware warning at the exact moment an employee is about to paste sensitive data into an AI tool, it's not replacing what they learned in training; it's activating it at the moment it's relevant.

The practical result is that security awareness training and Neon's guardrails make each other more effective. Training gives employees the conceptual framework; Neon applies it in real time, reinforces it consistently, and provides your security team with the data to know where training gaps still exist and where additional education is needed.

Build a security-aware workforce. One interaction at a time.